Projects:

AI & Typology – Yanfeng Chen, Xiang Yin, Dongjian Yuan

For thousands of years, people always have a perceptual recognition of churches although the configuration of churches varies within history. The essence of religious spirit does exist in the space of the churches but it is never detected and summarized as a design method. And nowadays, ritual space is gradually diversified in the modern church due to the flexible modernity and technological development.

Inspired by Aldo Rossi’s architectural typology theory, we conclude “church” is a “type” that we intend to train AI to learn the basic logic within it. AI can generate new styles of church by using neural networks. Church is always the place of spiritual aggregation of humans, as Rossi mentioned the best way to understand a city is finding the collective memory. That’s also the main concept that narrates why we tend to research churches and their ritual space. Therefore, we wonder could AI learn this historical typology of churches and develop their own method of the “type and style” in the new digital era. We seek silence, quietude, rituals, and symbolism, not only in form but in sentiment. Our project aims at creating new church spaces by training the AI to learn hundreds of church models, and by the way of 3D GAN.

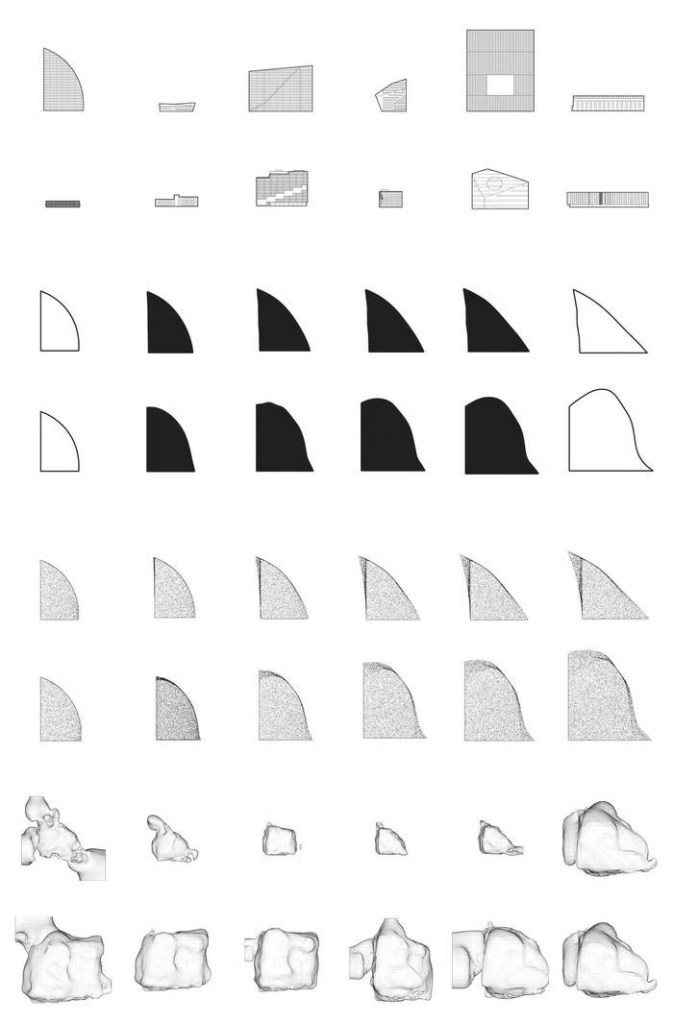

Reading through Aldo Rossi’s theory of Typology, we realize the central concept of “type and style.” “Type” is a permanent logic that is prior to form; “style” is the changing morphology within the history. We are also inspired by Professor Matias del Campo’s article about “type and style”. “Type” is the starting point, and “style” is an instrument that identifies the changing characteristics and the passage of time within historical architectural production. Over decades, many architects, researchers, or photographers worked a lot in architectural typology research. German photographers Bernhard Becher and Hilla Becher exhibit typological documentation photography of industrial facades, which represents the human way to clearly build a database and could be used by AI to learn its inherent type. Aldo Rossi also proposed an analogical city presenting the historical geometry learning and the new city design. Thus, we conclude the architectural typology as three steps: 1. Concrete (documenting the cases of a specific type); 2. Abstract (comparing, classifying, summarizing); 3. Concrete (use logical principle of “type” and characteristics of “style” to generate new cases). Here, AI Deep Learning serves as a typological design method. Database, deep learning, and generating is equal to architectural typology of documentation, learning “type,” and transferring “style.” AI & Typology picks “church” as a specific architectural type to study the application of artificial intelligence in the architectural typology research. Church is a building type used for Christian worship services and other Christian religious activities. The historical church styles are driven by cultural sympathy, functions, creativities and distinctive materiality. This style defines artistic achievements in various terms, and consists of great flexibility to show the continuous transformation in history. Because of the scope of this semester, we limited our research objects to two styles. Gothic churches obtain uniform and decorated styles, while modern churches are more flexible and geometry formed. We think these two styles represent two distinct directions in the development of history to represent the basic type of church architecture. By collecting 2000 church models (1000 Gothic churches and 1000 modern churches), we start building a church database, forming a workflow, and experimenting AI training results. A 3D PointCloud GAN called TreeGAN is what we use for generating a new church exterior prototype without labeling. We also use 2D to 3D Neural Style Transfer to attach the contextual texture of Manhattan onto the exterior prototype surface to get our final church exterior. But TreeGAN can only be used for exterior generation. For church interior, we decide to use 2D to 2D Neural Style transfer to generate desired church images and use 2D to 3D Neural Style transfer to apply them onto interior architectural components, like vaults or columns, to get the final church interior. Then, we will combine them to get our final church.

AI & Typology investigates the existing churches circumstances in Manhattan. The congregations have started to notice that one of the best ways to make their churches stay active and open is to sell the property for demolition. Although the Gothic architecture has long been admired, the interior infrastructure is crumbling. Because of that, the old churches’ leadership started to strike a deal with the developers to demolish the building and use the land to construct some other types of architecture, like residential buildings. However, the number of the Christans in Manhattan is increasing every year, and the demand for churches is very huge. Our target church is the Norfolk Street Baptist Church, which was built in 1848. It still has all the wonderful Gothic Revival touches of a mid-19th century church. But in 2012, the congregation asked the landmarks commission for permission to tear down the synagogue and sell the land to developers. Till now, the request is still on hold. Also citizens are unsatisfied with the reconstruction projects because they may destroy the historic urban texture of New York. So we are trying to use AI to generate a new church so that it could substitute the old structures in the same place. The new church will not only meet the demand of Christians, but also it will respond to the local history background and architectural style.

aggreg[AI]te-regener[AI]te – Alex Salvadero, Andrew Yang

aggreg[AI]te-regener[AI]te focuses on creating an AI-driven process of building material reclamation and re-use. Using Detroit as a proving ground for developing this process, the project will investigate the growing number of home demolitions within the city and seek to provide new life for these vacant buildings, while also providing much needed re-densification of Detroit neighborhoods. The project will develop a speculative workflow which imagines how machine vision can be used to complete the multitude of necessary tasks from demolition to integration of recycled building materials. As a proof of concept for this workflow, we will test strategies for implementing specific tasks through AI neural networks, serving as preliminary versions of how machine vision can have future implementations.

Near the beginning of the 20th century, Detroit became the center of the automobile industry with a huge number of jobs available, attracting both large numbers of overseas immigrants as well as African Americans from the South. Detroit also became one of the prominent cities for wartime production during World War 2. This cemented Detroit as one of the largest and most prosperous cities in the country, ballooning the population to nearly 2 million residents by 1950. To support this large population and on the heels of high automobile ownership, thousands of single family detached homes were built, coming to nearly define 20th century suburban sprawl in the US.

In the past several decades, Detroit has gone through what can be described as an identity crisis resulting from social and racial strife and subsequent economic decline from plummeting population and increasing housing vacancy and dilapidation. Population has fallen from a peak of nearly 2 million residents in 1950 to just 670,000 in 2019. This has led to a huge amount of housing vacancy rate in the city, making it difficult and extremely inefficient to provide essential services when there are very few residents in a given area. Since 2014, the City of Detroit has used federal tax dollars to demolish more than 15,000 homes between 2014 and 2020 with a total project cost of $265 million dollars. There are still an estimated 22,000 blighted homes left to be demolished and, in 2020, voters passed a $250 million bond issue to demolish an additional 8,000 homes and renovate 8,000 others. The large effort to demolish older, abandoned homes to remove blight from Detroit’s neighborhoods is necessary for Detroit’s future, however this also has the effect of eliminating a large portion of Detroit architectural history and identity. Our project is a recycling project from two standpoints – the first is recycling building material which can allow a more sustainable building future. Using machine vision to create novel ways to combine used materials into new components, this process can reduce demand for newly manufactured materials to supply our building needs. The second element our project seeks to recycle is architectural history itself. Through utilizing machine vision to synthesize Detroit home styles used commonly in the past, the process can give new life to Detroit’s identity and allow its past to continue informing its future. Recycling is the process of using specific separate elements and utilizing them coherently within a new form. This sense of recycling is through architectural features from separate home styles being utilized in a single synthesized Detroit-style home. While this project focuses on the subject of Detroit, this can be considered only a single case study of the application of Artificial Intelligence’s possible future impacts on Architecture. The project itself begins to investigate how AI can enhance and preserve human history.

Augmented Machine Vision – Jacquelyn Hecker, Peter Recht

Over the last few years, the proliferation of artificial intelligence applications in architectural design has led to conversations around authorship and agency in collaborative human-machine design processes. In 2016, Mario Carpo argued that we had arrived at a second digital turn in architecture – one defined by data surplus rather than data scarcity.1 We are now able, as designers, to work in this data rich environment by using machine vision in architectural design processes. Through unsupervised learning, neural networks can learn latent features of large datasets and generate novel outputs for a designer to evaluate. This is a fundamental shift in how we use digital tools not only for production but also design. By working in this way, this thesis leverages three dimensional latent space unsupervised learning to train a neural network on point cloud models and generate novel architectural form.

By training neural networks on purely architectural data sets they can begin to learn latent spatial and organizational patterns in everything from floorplans, construction details, building regulations in a specific region, and even 3D architectural solutions. The opportunities that machine vision brings to design can ultimately change the existing workflows and representation within architecture. These opportunities include the introduction of unsupervised learning

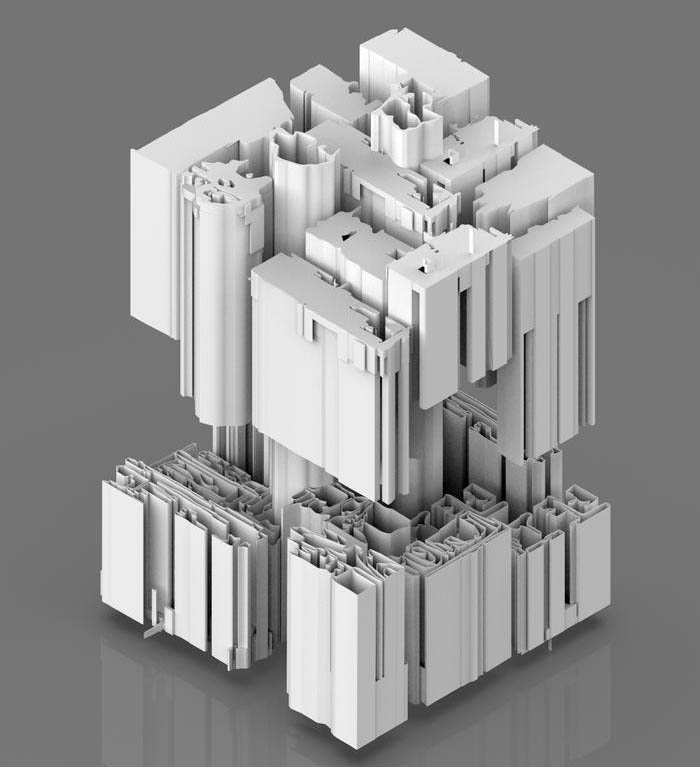

Traditional 3D architectural representation techniques epitomize the 3D world into two dimensions. This culture has culminated in BIM where coordination, synthesis and standardization take precedence to form an efficient process, for the production of architecture. New design languages have the potential to arise through the homogeneous segmentations of data within unsupervised learning.

Our work builds on 2D and 3D Generative Adversarial Networks as outlined by Goodfellow et al2 and Shu et al3 respectively. GANs such as 2D Neural Style for 2D image generation tasks have since been widely studied across many disciplines. GANs for 3D point clouds generation have rarely been studied in the computer vision field, yet alone the field of architecture. Three-dimensional geometric data offer an excellent domain for studying generative modeling.

Through the use of Tree-GAN, we generate novel point cloud outcomes from a modelled dataset of workspaces and offices using unsupervised learning. Point clouds are a homogeneous, expressive and compact representation of surface based geometry, with the ability to represent geometric operations while taking up little space. This design technique, whose mainstay is Tree-GAN, which has the ability to learn architectural features based on a three dimensional point cloud dataset. Tree-GAN can understand and generate semantic parts of objects without any prior knowledge by sampling a learnt latent space. It contains two networks, a discriminator and a generator. The generator, which is a tree structured graph convolutional network (Tree-GCN), performs graph convolutions based on tree structures. The Tree-GCN utilizes ancestor information from a tree and employs multiple supports to produce and represent 3D point clouds as outputs. The discriminator differentiates between real and generated point clouds to force the generator to produce more realistic points. The number of models used for training the GAN is crucial. For our thesis, a dataset of 960 workspaces was modeled to train TreeGAN.

This thesis seeks to understand the perception of machines through unsupervised learning and generative modeling. Several 3D generated language difficulties arise when interpreting the training results (Point Cloud-to-voxel; Point Cloud-to-mesh; and Point Cloud-to-Point Cloud). Through the process of curating a database of workplaces, training a neural network and generating new spatial outcomes, this thesis seeks to question disciplinary assumptions about agency and authorship in the design process as well as reflect on the ethics of designing in a data rich environment and the kinds of cultural values that reflect.

1 Carpo, Mario. The Second Digital Turn: Design Beyond Intelligence. Writing Architecture. Cambridge, Massachusetts: The MIT Press, 2017.

2 Goodfellow, Ian J., Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio. Generative Adversarial Networks, 2014.

3 Shu, Dong Wook, Sung Woo Park, and Junseok Kwon. “3D Point Cloud Generative Adversarial Network Based on Tree Structured Graph Convolutions.” CoRR abs/1905.06292 (2019). http://arxiv.org/abs/1905.06292

[AI]rport – Bruna Iunes Sanches, Kylie Williams, Jun Zhou

[AI]rport aims to generate an airport terminal using an artificial machine, trained on existing videos of airports and our own architecture intuition.

Using ideas from Mario Carpo’s The Second Digital Turn, we conclude that the ever increasing abundance of big data has not only changed our ways of making, but has “introduced a new culture and economics poised to change our ways of thinking”. A machine’s ability to search through a number of items in milliseconds without sorting, coupled with its ability to learn labels and patterns, makes an artificial intelligence capable of becoming a design tool that not only finds a solution, but generates it as well.

One of the goals of a design tool like this is to increase the speed at which architecture can respond to things happening in our world. We believe the current COVID pandemic will greatly affect the future of design, especially that of airports. Along with this, as climate change becomes more urgent and ecological commitments increase, so does “flying shame”. This changes the role of the airport from everyday vacation traveling to a more ecologically aware, need-base traveling. While this tool will be able to generate a terminal for any city’s needs, we chose Xiong’an as our site for its focus on new technology and sustainability efforts.

By collecting and captioning videos of existing airport interiors, we are able to create a dataset of frames based on the timestamp of each caption. Our captions were developed on three categories – Space, People, and Abstract – encompassing things like spaciousness, geometry, modularity, and light qualities. By constraining the categories, we are able to teach the machine to understand physical qualities of a frame without having to label every element in the image. The training captures a variety of airport scales and locations across the globe. The goal is to capture desirable qualities of all types of airports and have the machine translate them into a small terminal based on what it understands these qualities to be. To encode our intuition means to capture more than just what’s visible in the images, and include aspects of beauty and possible usage as well. The output will be generated through a sentence input. These sentences will describe qualities we wish the final space to have as one “walks through it”.

After researching our site and aspects of airport design, we developed goals and keywords of qualities to include in our generated terminal. These focus on the same three categories as the training captions. It’s important to understand that the only control we have of the output is through the training and input sentence, but we cannot accurately predict what the machine will generate based on its understanding of the training words. For the generated output to be a 3-dimensional model, rather than an image, we need to ensure it encodes position and time consistency when generating sequences of images. The sequence can then be made into a video, which can be made 3-dimensional by predicting depth information based on the encoded positions.

While only our terminal will be developed by the a.i., our airport is more than a typical traveler’s aid – it is a new flight technology hub. Along with a passenger terminal, it will contain training grounds for new airplanes and aerial rescue training, an Ehang (autonomous aerial vehicles) center and command station, a general aviation terminal for private planes, and connection to the underground infrastructure being built in Xiong’an. Our design proposes this as a system to connect to Xiong’An’s city center. Other than through E-hangs, the connection to the underground infrastructure will create access for freight carriers and autonomous passenger vehicles. For this tunnel, we propose a Metro line going under the Baiyang Dian Wetland to connect Xiong’an and the airport. This tool will not only be a design tool that helps architects find novel solutions based on big data, but it will also be a novel tool to GAN developers and users. Before this, generation was done by scraping the whole internet. There was no specific aim or purpose. In this case, we are using tools from deep learning to encode the intuition of an architect. This is also the beginning of “open world” generation. Open world is the term used to describe the complexity of the scene. Rather than an enclosed single object with a bounding box, it is more closely related to the complexities and different variables that we see in the “real” world. We are tackling the variability of complex scenes end-to-end. Training with full scenes, we are able to type an input and get the output. We tackle these problems of extra data and annotations by constraining the parameters of our captions, based on the categories previously mentioned. Some more novelty will also come when generating the final output, once the 2D training is complete, going from image sequence to video, and eventually to 3D. Making use of a.i.’s ability to navigate large quantities of data and recognize patterns through captions, we can train it with our own “intuition”, tapping into new ways of making and thinking. This will help the field of architecture engage with big data as not only an informant, but a tool of design, making its response faster and therefore more relevant to happenings of the world around us.

Anexact Building – Yuchun Huang, Yuxin Lin

The goal of Anexact Building is to demonstrate the ability of a Neural Network to integrate the architectural language of form through texts, and its potential to interpret the texts into a building under the topic of the coexistence between technologies and humans. The form of Anexact Building is not exact, or wholly to be simplified, but it is able to be reduced on a local level. It is through anexact forms that architecture takes a step towards becoming more heterogeneous: more like writing.

Since Machines are destined to compensate each other with humans, Anexact Building is motivated by future building patterns based on human and machine logic – Swarm Intelligence. It is also an experiment on exploring the purpose of design by bringing together all parts of a design problem, breaking them apart along the joints to better understand and recombine them using the power of the complexity of language.

Anexact Building is based on the texts describing architecture space design (160 Pattern Lists, 4720 Sentences) and nest-building behavior within Swarm Intelligence (36 Article Lists, 2250 Sentences). Looking for inter-relationship and connecting the same symbols to organize the compositions as a whole, all the texts in the dataset are arranged in the format of A Pattern Language1 and then trained with GPT-2 Algorithm2 to merge the swarm intelligence with architecture by inter-relating them together. To trigger the conversation with the machine after training, System Prompts referring to the interacting elements are composed to establish the holistic system within the context. The interacting elements are generated by mixing texts dataset of 4 architecture space types with 9 SI space types. Attentional Generative Adversarial Networks3 (AttnGAN) is the other algorithm to visualize the dialogues from GPT-2. Every sentence can be related to an image representing itself by using the algorithm, and every image will be interpreted by human intelligence into assembly unit models. The unit models will be pieced together into the Anexact Building. Finally, Anexact Building will include multiple functions of shopping, hotel, gardens, and apartments as a mixed-use building.

The output of Anexact Building is an experiment of combining texts, visualizing machine logic and artificial intelligence communicating with human intelligence. The process of designing Anexact Building provides the possibility to include the need from innate and intuitively derived feelings about spaces and places. In the aspects of language including semantic, allegory, and poetics that generate feelings and emotions, there is a sense of order inside. They could extend beyond the structural, topological, and syntactic aspects of the whole process. The next generation of the algorithm could be able to interpret output sentences into images automatically and then it could generate dialogue within the image database. Image discrimination can be automated by semantic segmentation or object detection in the future.

1 Alexander, Christopher, Ishikawa, Sara, and Silverstein, Murray. “A Pattern Language : Towns, Buildings, Construction.” Book. New York: Oxford University Press, 1977.

2 Radford, A., Jeffrey Wu, R. Child, David Luan, Dario Amodei and Ilya Sutskever. “Language Models are Unsupervised Multitask Learners.” (2019).

3 Xu, T., Pengchuan Zhang, Qiuyuan Huang, Han Zhang, Zhe Gan, Xiaolei Huang and X. He. “AttnGAN: Fine-Grained Text to Image Generation with Attentional Generative Adversarial Networks.” 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition (2018): 1316-1324.